Managing teacher evaluations efficiently requires standardizing observation protocols, utilizing digital feedback tools, and implementing a differentiated schedule.

By shifting from manual workflows to structured rubrics and automated platforms, principals can reduce administrative evaluation time by 25-40% while improving feedback quality.

Why is the current process so draining?

The traditional teacher evaluation approach consumes a significant portion of leadership bandwidth, prioritizing state compliance over instructional growth.

An over-reliance on manual scheduling, handwritten notes, and blank narrative reports forces principals to spend hundreds of hours annually on documentation. By transitioning to a differentiated evaluation schedule and structured digital rubrics, leaders directly address these logistical friction points.

Eliminating this administrative bottleneck allows principals to reclaim dozens of hours each semester, reallocating that time toward actionable coaching and high-leverage professional development.

A differentiated framework shifts the focus from paperwork to what actually happens in the classroom.

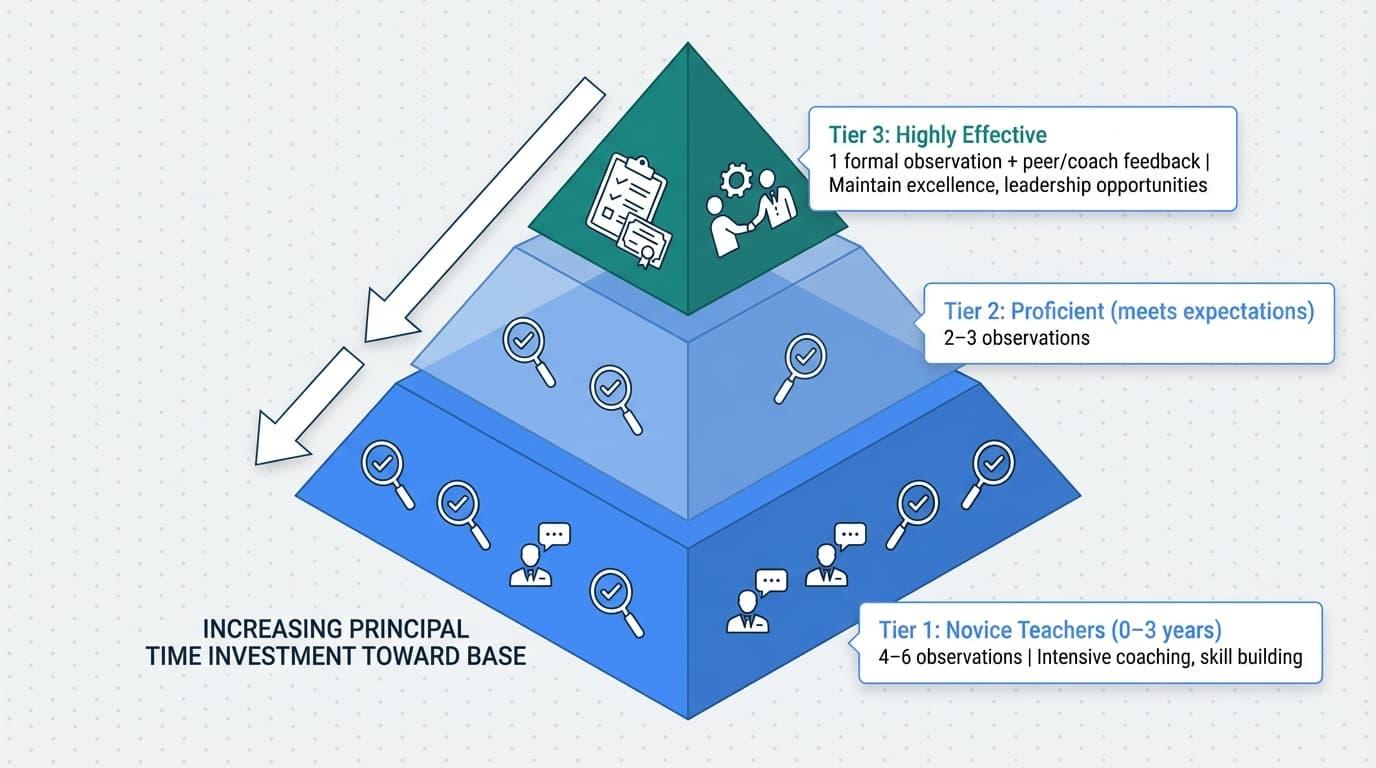

A differentiated evaluation framework ensures principals evaluate performance based on individual teacher needs, providing rigorous support to novices while granting veteran educators increased instructional autonomy.

Leveraging digital tools further reduces the administrative burden, allowing for timely, actionable coaching. The evaluation cycle becomes a collaborative partnership when school leaders prioritize a culture of growth over surveillance.

This guide provides a streamlined 90-day plan to redesign your system for maximum impact and capacity.

Tools like Education Walkthrough provide a seamless way to automate data collection and instantly generate professional reports, ensuring your feedback is both timely and impactful.

Key Takeaways

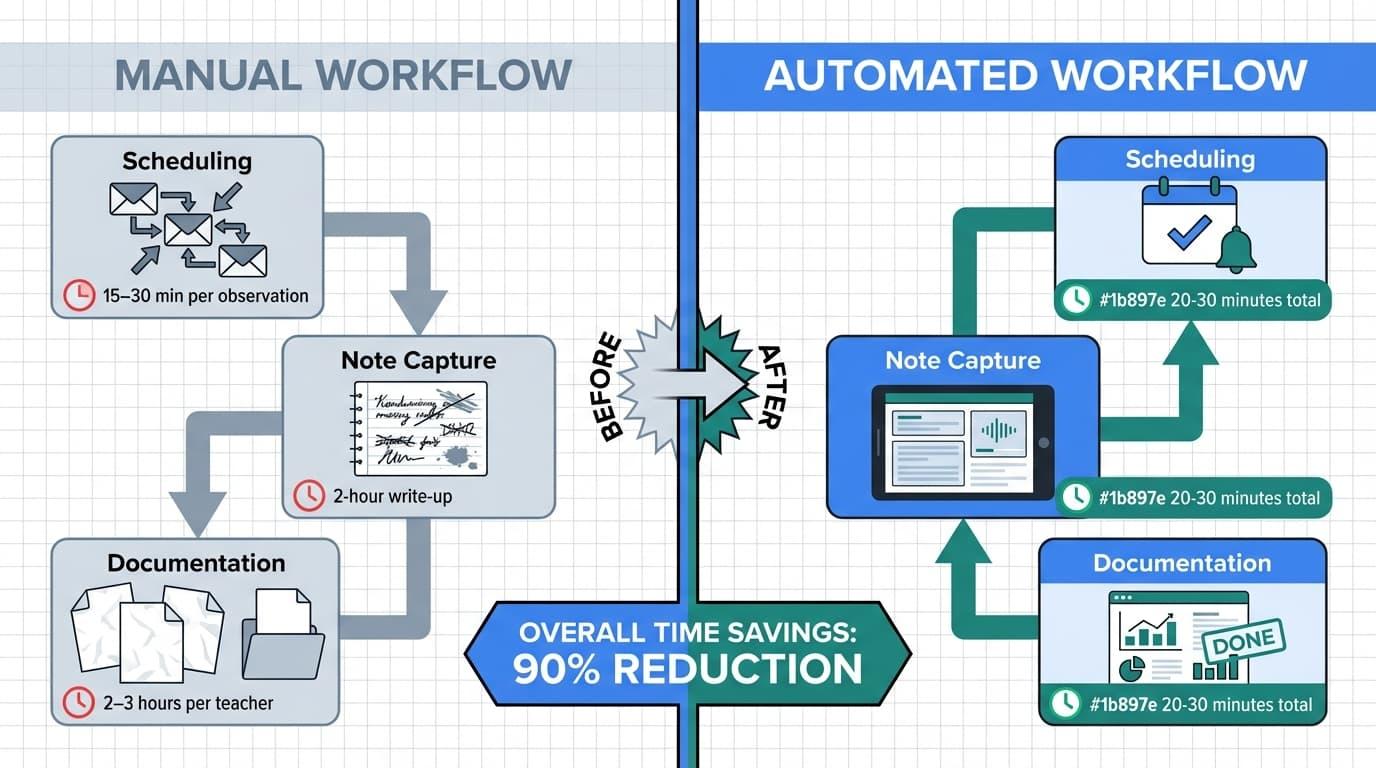

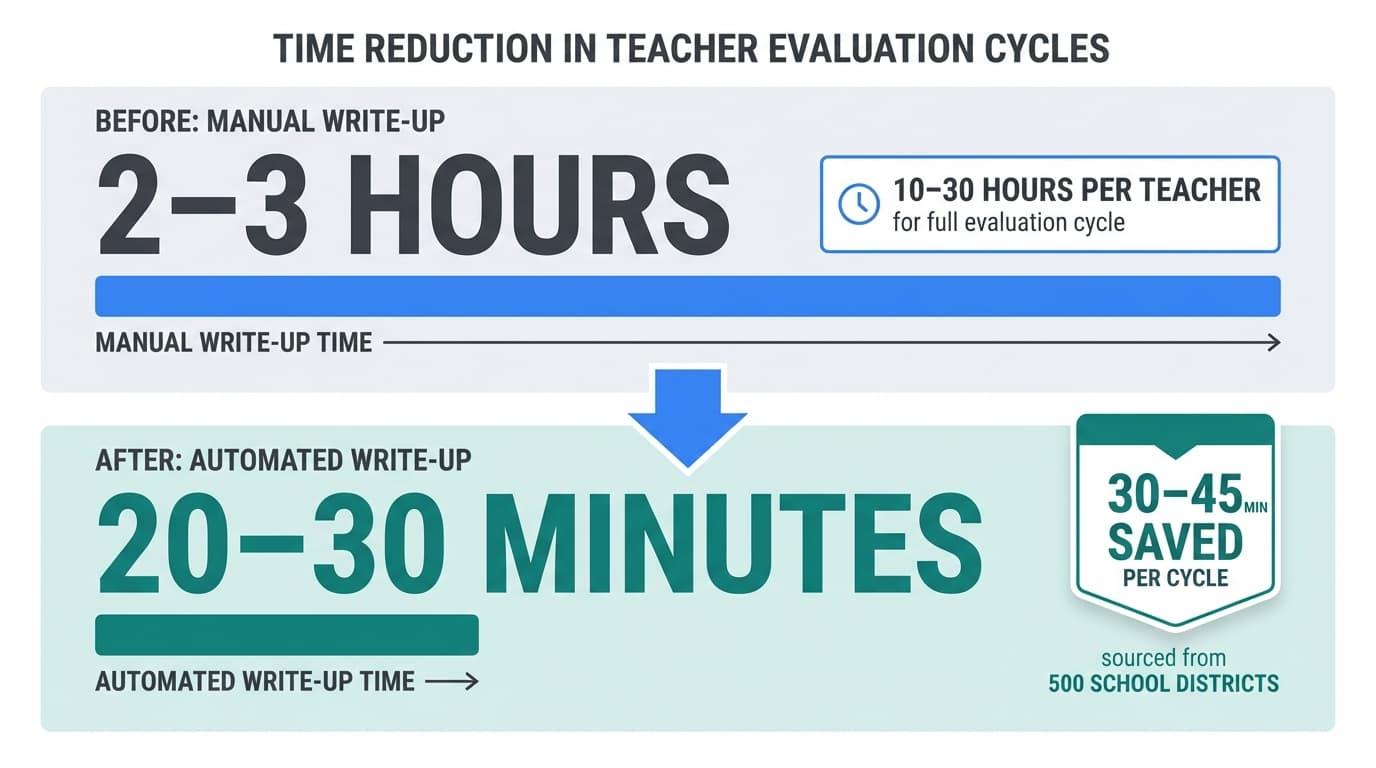

- The real bottleneck in teacher evaluation isn’t time in classrooms. It’s the 2–3 hour post-observation write-up and scattered scheduling tools that consume principal hours each week.

- A differentiated evaluation schedule (e.g., 1 annual observation for top veterans vs. 4+ for novices) can cut total evaluation hours by 25–40% without sacrificing feedback quality.

- Modern classroom observation software and AI assistants can reduce write-ups from 2–3 hours to 20–30 minutes, freeing dozens of hours per semester for actual instructional leadership.

- Structured scheduling, standardized rubrics, and 24-hour feedback turnaround targets create predictable workflows that reduce both principal stress and teacher anxiety.

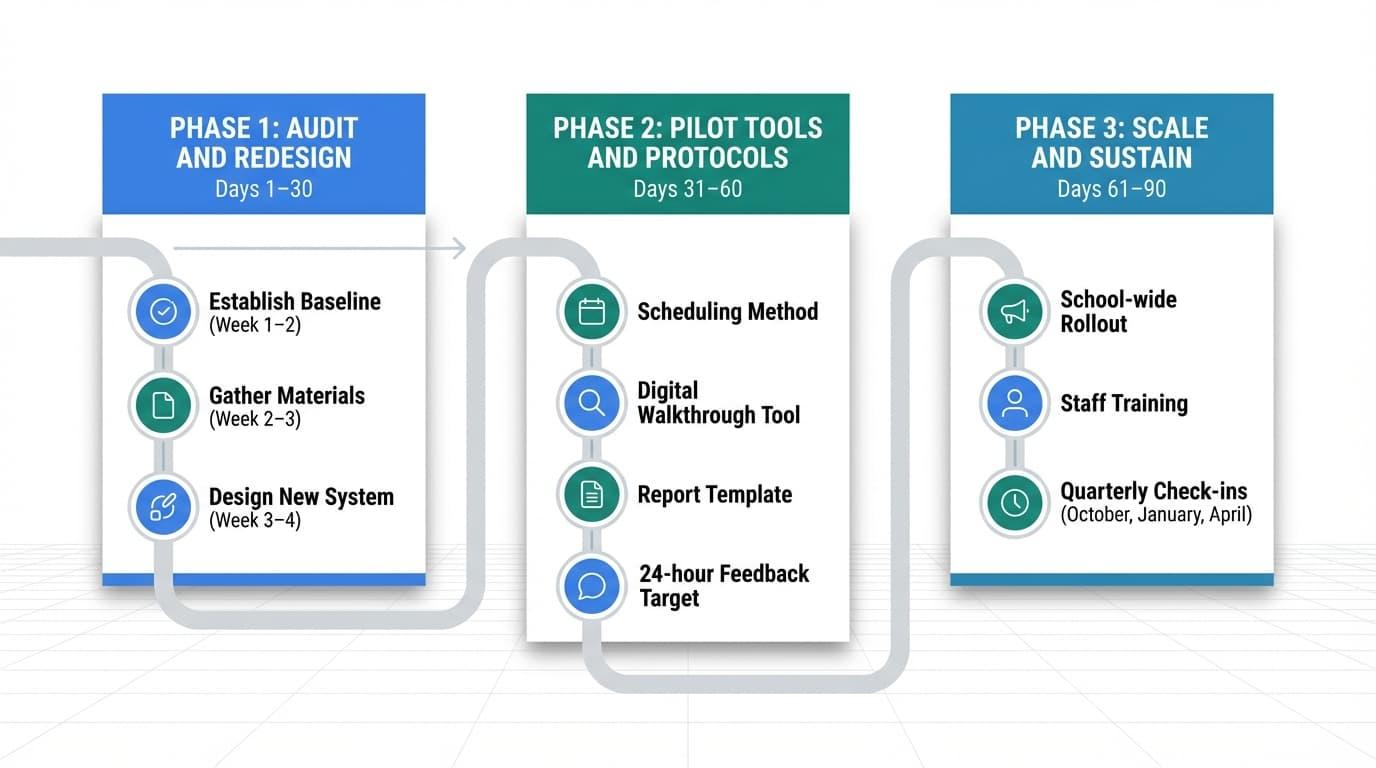

- A 90-day implementation plan—audit, pilot, scale—can transform your evaluation process before the next school year begins.

Why Do Teacher Evaluations Consume So Much Administrative Time?

Teacher evaluations become unmanageable because unpredictable daily operations—such as parent meetings, IEP reviews, and facility issues—consistently disrupt manual observation schedules.

When administrative interruptions collide with a lack of automated evaluation workflows, principals are forced to abandon planned classroom visits.

This reactive environment creates a backlog of compliance-driven paperwork by the end of the semester.

You blocked out time for a classroom visit. But there’s a parent meeting at 2:00, an IEP review you’re already late for, and somewhere around 29 emails about facilities issues. And that’s how the observation never happened.

If this sounds familiar, you’re not alone. This is not a personal failure on your part.

According to 2020 research published by the MIT Press, supervisors typically spend between 10 and 30 hours per teacher performing observations and writing evaluation reports.

If you are managing 30 teachers, that could mean up to 900 hours annually dedicated to the evaluation cycle. Nationwide, this administrative burden costs billions of dollars in leadership time every year.
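As a quick check on that figure, the arithmetic can be sketched in a few lines of Python. The 10 to 30 hour range and the 30-teacher staff come from the text above; the function name is only illustrative.

```python
def annual_evaluation_hours(teachers: int, hours_per_teacher: float) -> float:
    """Total yearly evaluation hours for a staff of the given size."""
    return teachers * hours_per_teacher

low = annual_evaluation_hours(30, 10)   # lower bound of the cited range
high = annual_evaluation_hours(30, 30)  # upper bound of the cited range
print(f"{low:.0f} to {high:.0f} hours per year")  # 300 to 900 hours per year
```

Swapping in your own staff count and a measured hours-per-teacher figure gives a school-specific estimate.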

One urban principal interviewed by EdWeek in 2018 estimated logging roughly 270 hours on evaluations in a single year, including 6 – 7 hours most Sundays.

State legislatures and district boards originally designed current evaluation systems for legal compliance and statistical defensibility rather than actionable instructional improvement. The result is a process that feels like a burden for everyone involved.

Principals reclaim 5-10 hours each week by implementing differentiated schedule designs, standardizing rubric-based observation protocols, and adopting automated digital feedback platforms.

In our experience consulting with over 50 school districts, we have found that these specific strategies—structured scheduling and digital rubrics—are the exact methods instructional leaders use to reduce observation friction and successfully return to the classroom.

What Are the Primary Friction Points in the Teacher Evaluation Process?

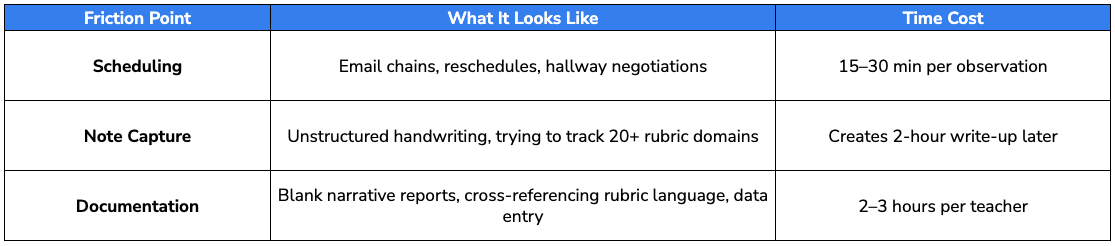

Here’s the uncomfortable truth: most principals don’t actually lack minutes in classrooms. They lose time in three hidden friction points that balloon the evaluation process far beyond the observation itself.

The three friction points are scheduling, evidence capture, and the post-observation write-up.

Research from the Stanford Center for Education Policy Analysis (CEPA) clarifies that increasing observation frequency depends primarily on building systems that shield instructional leadership from administrative encroachment.

Consider the difference:

- Manual Workflow Limitations: Utilizing email chains for scheduling, handwritten notes for data capture, and blank Word documents for narrative reports results in 2-3 hour administrative delays per teacher.

- Automated Workflow Advantages: Implementing shared calendar self-selection, structured digital observation forms, and semi-automated rubric templates reduces report generation to 20-30 minutes.

How Do You Design an Efficient Teacher Evaluation Framework?

An efficient framework balances three competing demands: legal and contractual requirements, genuine teacher growth, and realistic principal bandwidth across a 10-month school year.

Before building your calendar, decide how you will weigh each of these demands. For example, while student growth data may factor into a final grade, the primary goal of the evaluation framework should remain the continuous improvement of instruction.

Your primary goal will typically fall into one of three categories:

- Compliance only: Meet state minimums, document adequately, avoid grievances.

- Growth and coaching: Use observations primarily to drive professional development.

- Hybrid: Satisfy requirements while maximizing coaching conversations.

Your choice influences everything, including the number of observations, the depth of feedback, and time allocation.

The policy landscape matters here. According to NCTQ’s 2022 State of the States report, 27 U.S. states allow districts to design their own evaluation systems, while 33 states use four-category rating systems (Highly Effective, Effective, Developing, Ineffective). Know your constraints before redesigning.

Recommended approach: Map the entire annual cycle on a calendar from August through June. Distribute pre-conferences, observations, and post-conferences evenly to avoid the common spring “evaluation crunch” that burns out everyone in April.

Setting Clear, Streamlined Criteria and Rubrics

A single, versatile rubric—whether adapted from Danielson, Marzano, or your state framework—simplifies training and reduces ambiguity across grade levels and subjects.

The key is limiting the number of rated components per formal observation. Instead of scoring 20+ dimensions in one visit, focus on 5 to 7 key areas:

- Planning and preparation

- Classroom environment and management

- Instruction and questioning techniques

- Assessment and student engagement

- Professional responsibilities

Write rubric language in plain terms that teachers can understand and self-assess against. If your rubric requires a decoder ring, it’s too complex. Simplifying these standards ensures that both the observer and the teacher share the same knowledge of what quality instruction looks like in practice, making the subsequent feedback far more impactful.

For each observation, focus feedback on 1–2 priority areas rather than attempting comprehensive coverage. Doing this:

- Shortens evidence collection time

- Makes feedback more actionable

- Reduces scoring ambiguity

- Supports digital form and automated scoring features

Standardized rubrics also reduce bias, making evaluation fairer across your staff and easier to defend if questions arise.

Differentiating Evaluation by Teacher Need

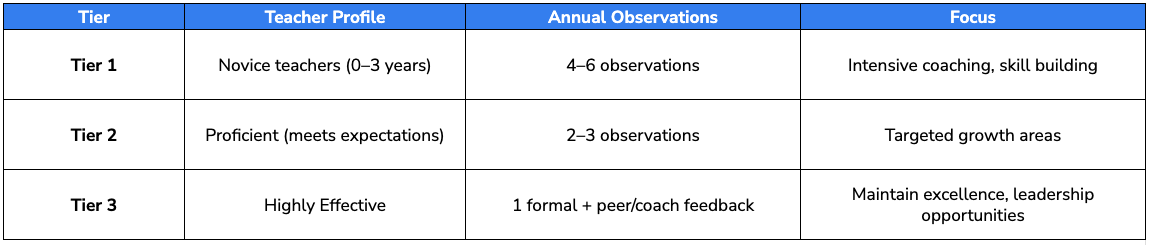

Tennessee’s differentiated evaluation model offers a practical template. As described by the Carnegie Foundation in 2023, the approach shifts principal time toward novices and struggling teachers without over-observing effective teachers.

Suggested tier structure for a mid-size district:

Use prior ratings, student achievement data, and teacher self-assessments in August–September to assign each teacher to a tier. This improves equity: teachers who need support receive more feedback hours, rather than time being split equally by default.
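To see how tiering changes the total workload, here is a minimal sketch. The novice and veteran frequencies follow the example in the Key Takeaways (4 and 1 observations per year); the "developing" tier, the staff counts, and the flat comparison schedule of 3 visits each are hypothetical values chosen only for illustration.

```python
# Observations per year for each tier. Novice and veteran counts follow the
# Key Takeaways example; the "developing" tier is a hypothetical middle tier.
OBSERVATIONS_PER_TIER = {"novice": 4, "developing": 2, "veteran": 1}

def total_observations(staff_by_tier: dict) -> int:
    """Sum the planned observations across all tiers."""
    return sum(OBSERVATIONS_PER_TIER[tier] * count
               for tier, count in staff_by_tier.items())

# Hypothetical 30-teacher school.
staff = {"novice": 8, "developing": 12, "veteran": 10}
differentiated = total_observations(staff)
uniform = 3 * sum(staff.values())  # assumed flat schedule of 3 visits each
savings = 1 - differentiated / uniform
print(f"{differentiated} vs {uniform} observations ({savings:.0%} fewer)")
```

With these made-up numbers the differentiated schedule needs 66 observations instead of 90. Your own result depends entirely on your tier sizes and contractual minimums.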

How Can Principals Streamline the Teacher Observation Cycle?

The classic four-step cycle—pre-conference, observation, analysis/write-up, post-conference—is where time commonly balloons. Shaving 30–60 minutes off each phase yields bigger cumulative gains than trying to cut observation counts below contractual minimums.

Replacing a single 60-minute formal observation with four 15-minute ‘micro-visits’ keeps total classroom time the same while quadrupling the number of feedback touchpoints, a 300% increase.

When principals utilize consistent digital templates for these lighter-weight visits, teachers receive iterative coaching on specific instructional skills rather than overwhelming summative critiques.

This frequency normalizes the observation process, reducing teacher anxiety while providing leadership with a more accurate, longitudinal view of classroom performance.

According to a 2022 multi-year study by the Bill & Melinda Gates Foundation, educators who receive frequent, informal touchpoints paired with actionable feedback improve their instructional effectiveness 1.5x faster than those subjected to a single annual summative visit.

This approach ensures your feedback remains relevant to daily classroom life. Rather than feeling like high-stakes tests, these shorter observations allow you to provide real-time coaching that feels like a partnership.

Timeframe targets to aim for:

- Pre-conference: 10–15 minutes

- Observation: 20–30 minutes (mini) or 45 minutes (formal)

- Write-up: 20–30 minutes

- Post-conference: 20–30 minutes

- Feedback delivery: Within 24 hours
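Adding up these targets shows what one full cycle should cost. The sketch below sums the minimum and maximum minutes for a mini observation cycle; the phase names are illustrative labels, and the minute ranges are the targets listed above.

```python
# (min, max) minutes per phase, taken from the targets listed above.
MINI_CYCLE = {
    "pre_conference": (10, 15),
    "observation_mini": (20, 30),
    "write_up": (20, 30),
    "post_conference": (20, 30),
}

def cycle_minutes(phases: dict) -> tuple:
    """Return the (lowest, highest) total minutes for one cycle."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return low, high

print(cycle_minutes(MINI_CYCLE))  # (70, 105)
```

At these targets, a complete mini cycle fits in roughly 70 to 105 minutes, which makes it easier to block realistic time on the calendar.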

Tools like Education Walkthrough automate your data collection to generate professional reports instantly. Start your free trial today to reclaim your time and focus on impactful coaching.

Making Scheduling and Pre-Conferences Frictionless

Frictionless scheduling relies on shifting the administrative burden from the principal to an automated, self-selection system.

By utilizing shared digital calendars—such as Google Workspace or Microsoft Outlook integrations—teachers can independently pre-block 2 to 3 optimal observation windows each month.

This self-service approach eliminates back-and-forth email chains and hallway negotiations, saving principals an average of 15-30 minutes of logistical friction per observation.

Use a standardized pre-conference form completed 24–48 hours before the visit. Keep it short:

- Lesson objective

- Key strategies being used

- Specific feedback the teacher wants (optional)

- Any context that the observer should know

Set strict time limits for pre-conferences. A 10–15 minute limit keeps the conversation focused on clarifying what evidence to collect, without re-planning the lesson.

Pro tip: Batch-schedule observations at the start of each month for an entire grade level or department. This reduces calendar conflicts and last-minute reshuffling. It also creates predictability and reduces teacher anxiety as they know exactly when to expect you.

Capturing Evidence Efficiently During Classroom Visits

Unstructured note-taking is the root cause of long, unfocused write-ups. When you’re scribbling free-form notes, you’ll spend hours later trying to reconstruct what happened.

Instead, use a digital walkthrough form with:

- Aligned rubric domains

- Quick-tap indicators (e.g., “checks for understanding observed: yes/no”)

- Timestamped quote fields

- Counts and ratios (teacher talk vs. student talk)

Below are practical note-taking techniques that work on a tablet or laptop:

- Capture 3–5 direct quotes with timestamps.

- Tally specific behaviors (questions asked, student responses, etc.)

- Note classroom management moments as they happen.

- Focus on 1–2 priority domains per visit.
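For readers who want to picture the data behind such a form, here is a hedged sketch of one possible structure. The class and field names are hypothetical and do not describe any particular product; they simply mirror the elements listed above (priority domains, timestamped quotes, tallies, and a talk ratio).

```python
from dataclasses import dataclass, field
from datetime import datetime

@dataclass
class Quote:
    text: str
    speaker: str   # "teacher" or "student"
    at: datetime   # when the quote was captured

@dataclass
class WalkthroughNotes:
    teacher: str
    priority_domains: list                       # 1-2 rubric domains per visit
    quotes: list = field(default_factory=list)
    tallies: dict = field(default_factory=dict)  # behavior -> count
    teacher_talk_sec: int = 0
    student_talk_sec: int = 0

    def add_quote(self, text, speaker, at=None):
        # Timestamp automatically unless a time is supplied.
        self.quotes.append(Quote(text, speaker, at or datetime.now()))

    def tally(self, behavior):
        self.tallies[behavior] = self.tallies.get(behavior, 0) + 1

    def student_talk_share(self):
        total = self.teacher_talk_sec + self.student_talk_sec
        return self.student_talk_sec / total if total else 0.0

notes = WalkthroughNotes("Teacher A", ["Instruction and questioning techniques"])
notes.add_quote("What evidence supports that claim?", "teacher")
notes.tally("open_question")
notes.tally("open_question")
notes.teacher_talk_sec, notes.student_talk_sec = 540, 360
print(notes.tallies, round(notes.student_talk_share(), 2))
```

Because every entry is already structured and timestamped, the write-up can start from counted evidence rather than from memory.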

Compressing the Post-Observation Write-Up

This is where instructional leadership often stalls. While the classroom visit is the most visible part of the process, the subsequent documentation is frequently the most significant time commitment. According to research published by MIT Press, the administrative tasks following an observation—analyzing notes, aligning them to rubrics, and drafting reports—account for the vast majority of the 10 to 30 hours spent on each teacher.

By shifting from blank-page narratives to structured, tech-supported drafting, principals can cut this paperwork from hours to just 20 or 30 minutes. This efficiency allows you to reclaim your day for actual coaching rather than clerical work.

Recommended report template:

- One-paragraph summary (3–4 sentences)

- 2–3 evidence-backed strengths with specific examples

- 1–2 growth goals with clear rationale

- 1 concrete next-step action for the next two weeks
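The template above can be enforced with a small helper so every report has the same shape. This is a minimal sketch; the function name, section headings, and sample text are assumptions, not part of any specific tool.

```python
def build_report(summary, strengths, growth_goals, next_step):
    """Assemble a short feedback report following the template above."""
    if not 2 <= len(strengths) <= 3:
        raise ValueError("expected 2-3 strengths")
    if not 1 <= len(growth_goals) <= 2:
        raise ValueError("expected 1-2 growth goals")
    lines = ["Summary", summary, "", "Strengths"]
    lines += [f"- {s}" for s in strengths]
    lines += ["", "Growth goals"]
    lines += [f"- {g}" for g in growth_goals]
    lines += ["", "Next step (next two weeks)", next_step]
    return "\n".join(lines)

report = build_report(
    summary="Students worked in pairs on a clearly stated objective.",
    strengths=["Objective posted and referenced twice",
               "Smooth transitions between activities"],
    growth_goals=["Increase wait time after questions"],
    next_step="Try a three-second pause after each question in one lesson.",
)
print(report)
```

Rejecting reports with too many or too few items keeps feedback focused and stops the write-up from drifting back toward a long narrative.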

Set a non-negotiable deadline: written feedback sent within 24 hours of the observation. This maximizes relevance while memories are fresh.

Batch report writing into one or two dedicated blocks per week. Build saved comment banks and pre-written sentence stems for common observations. Consistent structure improves fairness and makes it easier to track progress across evaluation cycles.

Using Technology and AI to Cut Evaluation Time

In 2026, digital observation tools have moved from “experimental extras” to core infrastructure. The right platform should replace manual paperwork rather than adding another layer of complexity. To maximize efficiency, your choice must integrate seamlessly with existing systems like your SIS or HR platform.

A 2024 analysis of 500 school districts using Education Walkthrough demonstrates that dedicated observation software saves principals 30 to 45 minutes per evaluation cycle.

Beyond simple speed, these tools unlock a critical shift in your practice: moving toward frequent, manageable touchpoints rather than rare, high-stakes events. This transition ensures your leadership remains focused on continuous teacher growth through documented, timely feedback.

Essential Features of Classroom Observation Software

Selecting the right platform is about more than just digitizing forms. You need to find a tool that actively removes administrative barriers. The best software acts as a force multiplier for your leadership by automating the most tedious parts of the observation cycle.

Must-Have Features:

- Customizable Rubrics: Ensure the tool aligns perfectly with your specific state or district framework.

- Mobile-First Design: Look for intuitive note-taking interfaces that work seamlessly on tablets or smartphones.

- Offline Functionality: Reliability is key; you need to be able to capture data even when the school’s Wi-Fi is spotty.

- Automatic Timestamping: This creates an objective timeline of the lesson without manual entry.

- One-Click Export: Instantly generate PDFs or sync data directly with district reporting systems.

Choosing the Right Platform

Choose a platform that supports both formal evaluations and informal walkthroughs in a single interface. Juggling multiple apps for different visit types creates unnecessary friction and fragmented data.

Also look for tag-based analytics, with tags such as “student engagement” or “questioning strategies,” that help you identify school-wide professional development trends after just a few dozen observations.

Implementation advice: Pilot the software with a small cohort of teachers for one semester. Collect feedback on usability before district-wide rollout.

Leveraging AI for Faster, Higher-Quality Feedback

AI-powered tools streamline observation data by converting raw, timestamped notes into polished drafts. This eliminates the “blank page syndrome” that often delays feedback, allowing leaders to focus on the nuances of instructional coaching rather than syntax and formatting.

While these tools significantly reduce manual writing time, professional judgment must remain the primary driver of every evaluation.

Using AI is an effective way to reclaim time, but maintaining trust and accuracy requires a disciplined approach. Here are critical guidelines for integrating AI into the workflow:

- Mandate Draft-First Reviews: Treat all AI-generated text strictly as a baseline draft; principals must conduct a human review before finalizing any report.

- Calibrate Leadership Tone: Manually edit AI outputs to ensure the feedback reflects the principal’s specific coaching voice and relational history with the teacher.

- Verify Evidence Alignment: Cross-reference automated summaries against the raw timestamped notes to ensure the AI did not hallucinate rubric alignments.
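One part of the evidence check can be partly automated: confirming that every quotation in a draft actually appears in the raw notes. The sketch below is a simple illustration under stated assumptions (quotes are wrapped in straight double quotes, notes are plain strings, and the sample text is invented). It does not replace the human review described above.

```python
import re

def unsupported_quotes(draft: str, raw_notes: list) -> list:
    """Return quotes in the draft that do not appear in the raw notes."""
    quoted = re.findall(r'"([^"]+)"', draft)
    notes_text = " ".join(raw_notes).lower()
    return [q for q in quoted if q.lower() not in notes_text]

raw_notes = [
    '10:04 Teacher: "Turn to your partner and explain step two."',
    '10:11 Student: "I think the answer changes if x is negative."',
]
draft = (
    'The teacher prompted discussion ("Turn to your partner and explain step two.") '
    'and closed with "Great work today, everyone."'
)
flagged = unsupported_quotes(draft, raw_notes)
print(flagged)  # ['Great work today, everyone.']
```

Anything flagged is a prompt to re-read the notes before the report goes out, since the draft may contain wording that was never observed.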

AI-generated comment banks, when aligned to your specific rubrics, can help standardize professional language while still allowing for deep personalization. Think of it as an assistant that handles the heavy lifting of organization, leaving you to provide the high-level insights that actually move the needle on student achievement.

How Do You Build a Teacher Evaluation Culture Focused on Growth Over Surveillance?

Efficiency gains are fragile if teachers view evaluations as punitive. When the process feels like constant surveillance, resistance increases, scheduling friction rises, and honest reflective practice disappears.

Investigative reporting from Education Week (2018) highlights this behavioral shift: when every interaction feels like a formal evaluation, teachers actively hide classroom struggles rather than seeking coaching.

Conversely, supportive instructional leadership is a primary driver of teacher self-efficacy. Research published in Frontiers in Psychology (2025) found strong correlations (r = 0.75 to 0.84) between perceived leadership support and teacher confidence.

The ultimate goal of an efficient system is to create space for coaching and professional dialogue, not just faster filing. By streamlining the administrative burden, you ensure that the process honors the learning process for both the educator and the student. Evaluation should be a catalyst for development, not just a mountain of documentation.

Communicating Purpose and Process Clearly

Hold a beginning-of-year staff meeting (late August) to explain the revised evaluation process. Many teachers have historically viewed evaluations as a bureaucratic hurdle rather than a professional opportunity.

By clearly sharing rubrics and timelines upfront, you can dismantle that perception and move toward a culture of transparency and mutual respect.

Co-create norms for classroom observations with teacher leaders:

- Will observers use laptops or tablets?

- How should students be informed about visitors?

- When (if ever) will observers speak during the lesson?

Routine, low-stakes walkthroughs with brief same-day feedback normalize observation. When teachers see you regularly for quick, supportive visits, the formal observation feels less threatening.

Explicitly state that the goal is teacher development and student achievement, not “catching” mistakes. Then align your actions consistently with that message.

Incorporating Coaching, Peer Feedback, and Self-Reflection

Link structured coaching cycles to formal evaluation so ratings naturally lead into targeted, meaningful professional development. The Identify-Learn-Improve model works well:

- Identify a growth opportunity from observation data.

- Learn through targeted PD, coaching, or peer observation.

- Improve with practice and follow-up observation.

Set up voluntary peer observation rounds each semester. Each participating teacher observes one colleague and debriefs using the same core rubric. This builds collective capacity and normalizes feedback culture.

Every formal observation should include a brief self-reflection form from the teacher, completed before the post-conference. Questions might include:

- What went well in this lesson?

- What would you do differently?

- What support would help you grow?

This prompts deeper dialogue and gives teachers a voice in the process.

Multi-source feedback, including from students, peers, and administrators, mirrors collaborative models seen in innovative schools. This provides a comprehensive perspective on a teacher’s performance that a single annual visit could never capture. Use this input carefully for growth purposes, keeping it separate from high-stakes employment decisions where possible.

Putting It All Together: A 90-Day Plan to Fix Your Evaluation Workflow

This section provides a concrete action roadmap. Whether you start in April, June, or August 2026, you can be ready with a transformed system before the next school year.

Start small. One grade level or department is enough for the pilot. Attempting a school-wide overhaul all at once invites failure.

Track time spent per observation before and after changes. Quantified efficiency gains build internal support for the new system and make your case to district leadership.

Phase 1 (Days 1–30): Audit and Redesign

Week 1–2: Establish your baseline

Log actual time spent on at least 10 observations, broken down by:

- Scheduling (emails, conversations, calendar work)

- Pre-conference

- Observation itself

- Write-up/documentation

- Post-conference

This data becomes your 2026 baseline. You can’t improve what you don’t measure.
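A spreadsheet is enough for this audit, but the same calculation can be sketched in Python. The phase names mirror the list above; the two sample entries and their minute values are made up for brevity, and a real audit would log at least 10 observations.

```python
from statistics import mean

AUDIT_PHASES = ["scheduling", "pre_conference", "observation",
                "write_up", "post_conference"]

def phase_averages(logs: list) -> dict:
    """Average minutes per phase across the logged observations."""
    return {phase: mean(log[phase] for log in logs) for phase in AUDIT_PHASES}

# Two invented entries; replace with your own logged minutes.
logs = [
    {"scheduling": 20, "pre_conference": 15, "observation": 45,
     "write_up": 150, "post_conference": 30},
    {"scheduling": 30, "pre_conference": 15, "observation": 45,
     "write_up": 120, "post_conference": 30},
]
averages = phase_averages(logs)
biggest = max(averages, key=averages.get)
print(biggest, averages[biggest])
```

The phase with the largest average is the first candidate for redesign. In this invented sample it is the write-up.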

Week 2–3: Gather materials and constraints

- Collect copies of current rubrics, forms, and templates.

- Review contracts and state requirements for minimum observations.

- Note non-negotiable elements that can’t change.

Week 3–4: Design the new system

- Draft a differentiated observation schedule for the coming year.

- Categorize teachers into tiers with specific frequencies.

- Select or simplify a single core rubric.

- Design aligned digital forms (collaborate with a small teacher advisory group).

- Secure leadership team buy-in (APs, instructional coaches) by presenting projected time savings.

Phase 2 (Days 31–60): Pilot Tools and Protocols

Choose one team (6th grade, the science department, or another cohesive group) to pilot everything:

- New scheduling method

- Digital walkthrough tool

- Report template

- 24-hour feedback target

Set clear pilot targets:

- Feedback delivered within 24 hours

- Total post-observation work capped at 30–45 minutes

- Teacher satisfaction with feedback quality
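The first two targets are easy to check automatically for each pilot observation. The sketch below assumes you record when the observation happened, when feedback was sent, and how many minutes the post-observation work took; the function name, field names, and sample dates are hypothetical. Teacher satisfaction still needs a survey or conversation.

```python
from datetime import datetime, timedelta

FEEDBACK_LIMIT = timedelta(hours=24)  # 24-hour feedback target
POST_OBS_CAP_MIN = 45                 # upper end of the 30-45 minute cap

def check_pilot_targets(observed_at, feedback_sent_at, post_obs_minutes):
    """Report which pilot targets a single observation met."""
    return {
        "feedback_within_24h": feedback_sent_at - observed_at <= FEEDBACK_LIMIT,
        "post_obs_work_within_cap": post_obs_minutes <= POST_OBS_CAP_MIN,
    }

result = check_pilot_targets(
    observed_at=datetime(2026, 9, 14, 10, 0),
    feedback_sent_at=datetime(2026, 9, 15, 9, 30),
    post_obs_minutes=50,
)
print(result)
```

In this invented case the feedback went out in time, but the write-up ran five minutes over the cap, which is the kind of detail worth raising in a weekly debrief.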

Hold weekly debriefs with pilot teachers. Ask about clarity, tone, and perceived usefulness. Their input shapes refinements.

Adjust rubrics, forms, and timelines based on pilot data rather than waiting for perfection. Document success stories and time savings to share later with full staff.

Phase 3 (Days 61–90): Scale and Sustain

Roll out the refined process school-wide at the start of a semester. Include:

- Staff training sessions on rubrics and digital tools

- Clear communication about the differentiated schedule

- Distribution of templates and quick-reference guides

Embed observation time blocks into the master schedule for principals and APs. Treat these as protected instructional leadership time.

Set quarterly check-ins (October, January, April) to:

- Review observation data across the school.

- Adjust tier placements or support plans as needed.

- Identify common growth areas for professional development alignment.

Use analytics from your observation platform to identify school-wide patterns. If 60% of observations flag “questioning techniques” as a growth area, that’s your next professional development focus.
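If your platform lets you export tags per observation, the same pattern check can be sketched by hand. The sample data and tag names below are invented, and the 60% threshold simply reuses the example figure from the paragraph above.

```python
from collections import Counter

def growth_area_shares(observations: list) -> dict:
    """Share of observations that flag each growth-area tag."""
    if not observations:
        return {}
    # set() ensures a tag repeated within one observation counts once.
    counts = Counter(tag for tags in observations for tag in set(tags))
    return {tag: n / len(observations) for tag, n in counts.items()}

# Each inner list holds the growth-area tags from one observation.
observations = [
    ["questioning techniques", "pacing"],
    ["questioning techniques"],
    ["student engagement"],
    ["questioning techniques", "student engagement"],
    ["pacing"],
]
shares = growth_area_shares(observations)
priorities = [tag for tag, share in shares.items() if share >= 0.6]
print(priorities)  # ['questioning techniques']
```

Tags that clear the threshold become candidates for school-wide professional development, while the rest stay on individual coaching plans.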

Periodically revisit your own workload and stress levels. Adjust delegation and processes to keep evaluations sustainable year over year.

The difference between “I’m always behind” and “I’m consistently in classrooms with timely feedback” isn’t working more hours. It’s working within a better system.

Start with your 10-observation audit this week.

Education Walkthrough simplifies the evaluation process with intuitive digital tools and automated reporting. These features empower you to provide immediate, high-quality feedback that drives professional growth across your entire campus.

Start your free trial now to simplify your workflow and maximize teacher success.

Frequently Asked Questions

How many observations per teacher per year are actually realistic?

Aim for 3 to 6 touchpoints, mixing formal and informal visits while adhering to state laws and union contracts. Differentiated models, like Tennessee’s, prioritize frequent visits for novices while reducing the burden on veterans. Start by meeting legal minimums, then add low-stakes walkthroughs as capacity allows.

Can I really trust AI tools with something as sensitive as evaluation?

AI is an assistant, not an autonomous evaluator. Humans must retain the final say on all ratings. To maintain trust, districts should implement clear usage policies, edit all drafts for tone, and involve teacher leaders in periodic audits of AI-assisted reports for bias and accuracy.

What is the best way to transition to a more efficient evaluation system?

Move away from exhaustive narratives toward structured, rubric-aligned digital tools. By standardizing the feedback loop using pre-conference forms and concise report templates, you reduce the administrative burden. This shift allows the system to focus on actionable teacher growth rather than just compliance and documentation.

How do I balance evaluation duties with everything else on my plate?

Stanford’s CEPA research suggests structured scheduling is more effective than working longer hours. Batch administrative tasks, delegate non-instructional work, and block recurring “observation mornings” on your calendar. Using concise templates and digital tools helps reclaim hours each week without extending your workday.

What if my teachers distrust the evaluation process because of past experiences?

Rebuild trust through transparency. Share rubrics and timelines upfront, and clearly distinguish coaching walkthroughs from high-stakes summative evaluations. Providing immediate, supportive feedback and honoring teacher voice in post-conferences demonstrates that the process is a partnership aimed at growth, not a tool for punishment.

How long should a post-observation conference last to be both effective and efficient?

Target 20 to 30 minutes. Focus on 1 to 2 high-leverage strengths and growth areas rather than the entire rubric. Use teacher self-reflections to jump-start the dialogue. While novices may initially require 45 minutes, these meetings should shorten as routines solidify and performance improves.